Artificial Intelligence

Enabling customers to deliver production-ready AI agents at scale

Today, I’m excited to share how we’re bringing this vision to life with new capabilities that address the fundamental aspects of building and deploying agents at scale. These innovations will help you move beyond experiments to production-ready agent systems that can be trusted with your most critical business processes.

Beyond accelerators: Lessons from building foundation models on AWS with Japan’s GENIAC program

In 2024, the Ministry of Economy, Trade and Industry (METI) launched the Generative AI Accelerator Challenge (GENIAC), a Japanese national program to boost generative AI by providing companies with funding, mentorship, and massive compute resources for foundation model (FM) development. AWS was selected as the cloud provider for GENIAC’s second cycle (cycle 2) and provided infrastructure and technical guidance to 12 participating organizations.

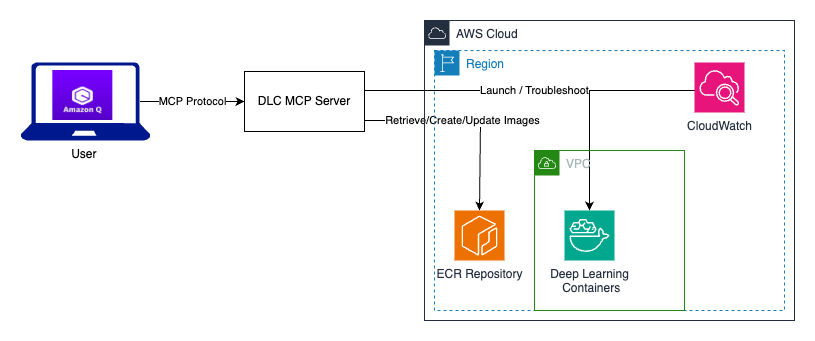

Streamline deep learning environments with Amazon Q Developer and MCP

In this post, we explore how to use Amazon Q Developer and Model Context Protocol (MCP) servers to streamline AWS Deep Learning Containers (DLC) workflows by automating the creation, execution, and customization of containers.

Build an AI-powered automated summarization system with Amazon Bedrock and Amazon Transcribe using Terraform

This post introduces a serverless meeting summarization system that uses Amazon Bedrock and Amazon Transcribe to transform audio recordings into concise, structured, and actionable summaries. By automating this process, organizations can save time while making sure key insights, action items, and decisions are systematically captured and made accessible to stakeholders.

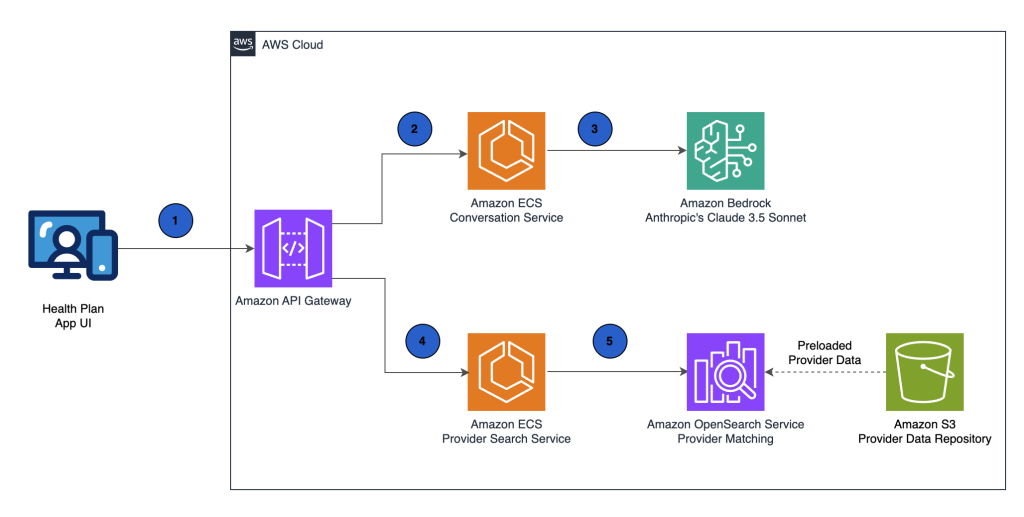

Kyruus builds a generative AI provider matching solution on AWS

In this post, we demonstrate how Kyruus Health uses AWS services to build Guide. We show how Amazon Bedrock, a fully managed service that provides access to foundation models (FMs) from leading AI companies and Amazon through a single API, and Amazon OpenSearch Service, a managed search and analytics service, work together to understand everyday language about health concerns and connect members with the right providers.

Use generative AI in Amazon Bedrock for enhanced recommendation generation in equipment maintenance

In the manufacturing world, valuable insights from service reports often remain underutilized in document storage systems. This post explores how Amazon Web Services (AWS) customers can build a solution that uses generative AI to automate the digitization of service reports and the extraction of crucial information from them.

Build real-time travel recommendations using AI agents on Amazon Bedrock

In this post, we show how to build a generative AI solution using Amazon Bedrock that creates bespoke holiday packages by combining customer profiles and preferences with real-time pricing data. We demonstrate how to use Amazon Bedrock Knowledge Bases for travel information, Amazon Bedrock Agents for real-time flight details, and Amazon OpenSearch Serverless for efficient package search and retrieval.

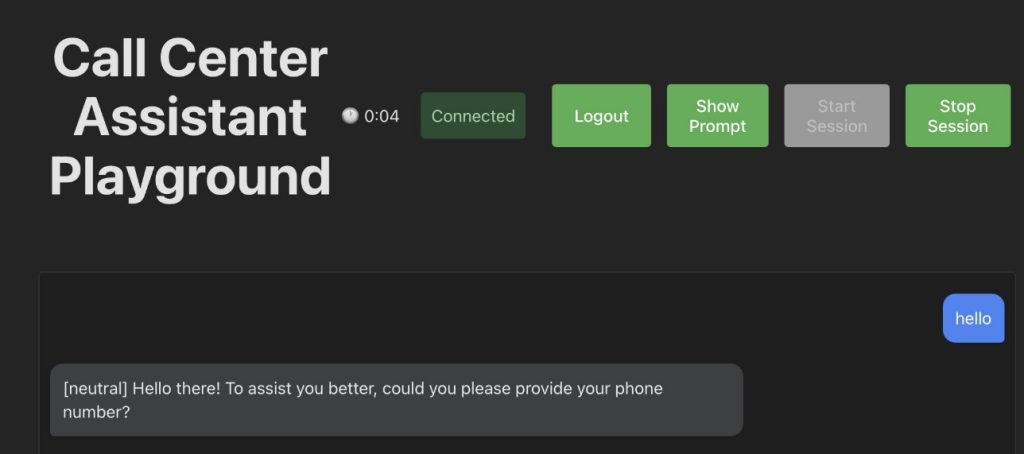

Deploy a full stack voice AI agent with Amazon Nova Sonic

In this post, we show how to create an AI-powered call center agent for a fictional company called AnyTelco. The agent, named Telly, can handle customer inquiries about plans and services while accessing real-time customer data using custom tools implemented with the Model Context Protocol (MCP) framework.

Manage multi-tenant Amazon Bedrock costs using application inference profiles

This post explores how to implement a robust monitoring solution for multi-tenant AI deployments using a feature of Amazon Bedrock called application inference profiles. We demonstrate how to create a system that enables granular usage tracking, accurate cost allocation, and dynamic resource management across complex multi-tenant environments.
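The cost-allocation idea can be illustrated with a minimal sketch. It assumes usage has already been collected as one record per invocation, each tagged with a tenant identifier. The record fields (`tenant`, `input_tokens`, `output_tokens`), the function name `allocate_costs`, and the prices are hypothetical placeholders, not part of the Amazon Bedrock API or its pricing.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens; actual prices depend on
# the model and Region and are not taken from the post.
PRICE_PER_1K_INPUT = 0.003
PRICE_PER_1K_OUTPUT = 0.015


def allocate_costs(usage_records):
    """Sum token usage per tenant and convert it to an estimated cost."""
    totals = defaultdict(lambda: {"input_tokens": 0, "output_tokens": 0})
    for record in usage_records:
        tenant_totals = totals[record["tenant"]]
        tenant_totals["input_tokens"] += record["input_tokens"]
        tenant_totals["output_tokens"] += record["output_tokens"]

    costs = {}
    for tenant, t in totals.items():
        cost = (
            t["input_tokens"] / 1000 * PRICE_PER_1K_INPUT
            + t["output_tokens"] / 1000 * PRICE_PER_1K_OUTPUT
        )
        costs[tenant] = round(cost, 6)
    return costs


# Example usage with made-up records (assumed shape, for illustration only).
records = [
    {"tenant": "tenant-a", "input_tokens": 1000, "output_tokens": 1000},
    {"tenant": "tenant-a", "input_tokens": 2000, "output_tokens": 0},
    {"tenant": "tenant-b", "input_tokens": 500, "output_tokens": 200},
]
print(allocate_costs(records))
```

In a real deployment, the per-tenant records would come from whatever usage metrics the monitoring solution collects. The aggregation step stays the same regardless of where the data originates.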

Evaluating generative AI models with Amazon Nova LLM-as-a-Judge on Amazon SageMaker AI

Evaluating the performance of large language models (LLMs) goes beyond statistical metrics like perplexity or bilingual evaluation understudy (BLEU) scores. For most real-world generative AI scenarios, it’s crucial to understand whether a model is producing better outputs than a baseline or an earlier iteration. This is especially important for applications such as summarization, content generation, […]
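One common way to express "better than a baseline" is a pairwise win rate over a set of prompts. The sketch below assumes a judge has already labeled each comparison as `"candidate"`, `"baseline"`, or `"tie"`. These labels and the function name `win_rate` are illustrative assumptions, not the output format of any particular judge model.

```python
from collections import Counter


def win_rate(verdicts):
    """Return the share of decided comparisons won by the candidate.

    Ties are excluded from the denominator. Returns None when no
    comparison was decided either way.
    """
    counts = Counter(verdicts)
    decided = counts["candidate"] + counts["baseline"]
    if decided == 0:
        return None
    return counts["candidate"] / decided


# Example usage with made-up verdicts.
verdicts = ["candidate", "baseline", "candidate", "tie", "candidate"]
print(win_rate(verdicts))
```

Excluding ties is one design choice among several. Counting a tie as half a win for each side is another reasonable convention.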

Building cost-effective RAG applications with Amazon Bedrock Knowledge Bases and Amazon S3 Vectors

In this post, we demonstrate how to integrate Amazon S3 Vectors with Amazon Bedrock Knowledge Bases for RAG applications. You’ll learn a practical approach to scaling your knowledge bases to handle millions of documents while maintaining retrieval quality and taking advantage of the cost-effective storage that S3 Vectors provides.